everything you need to navigate AI hype

five minutes of foundational context for the argument that's not going away.

The Nuance gives you the foundational context on the things reshaping your world. Every Friday.

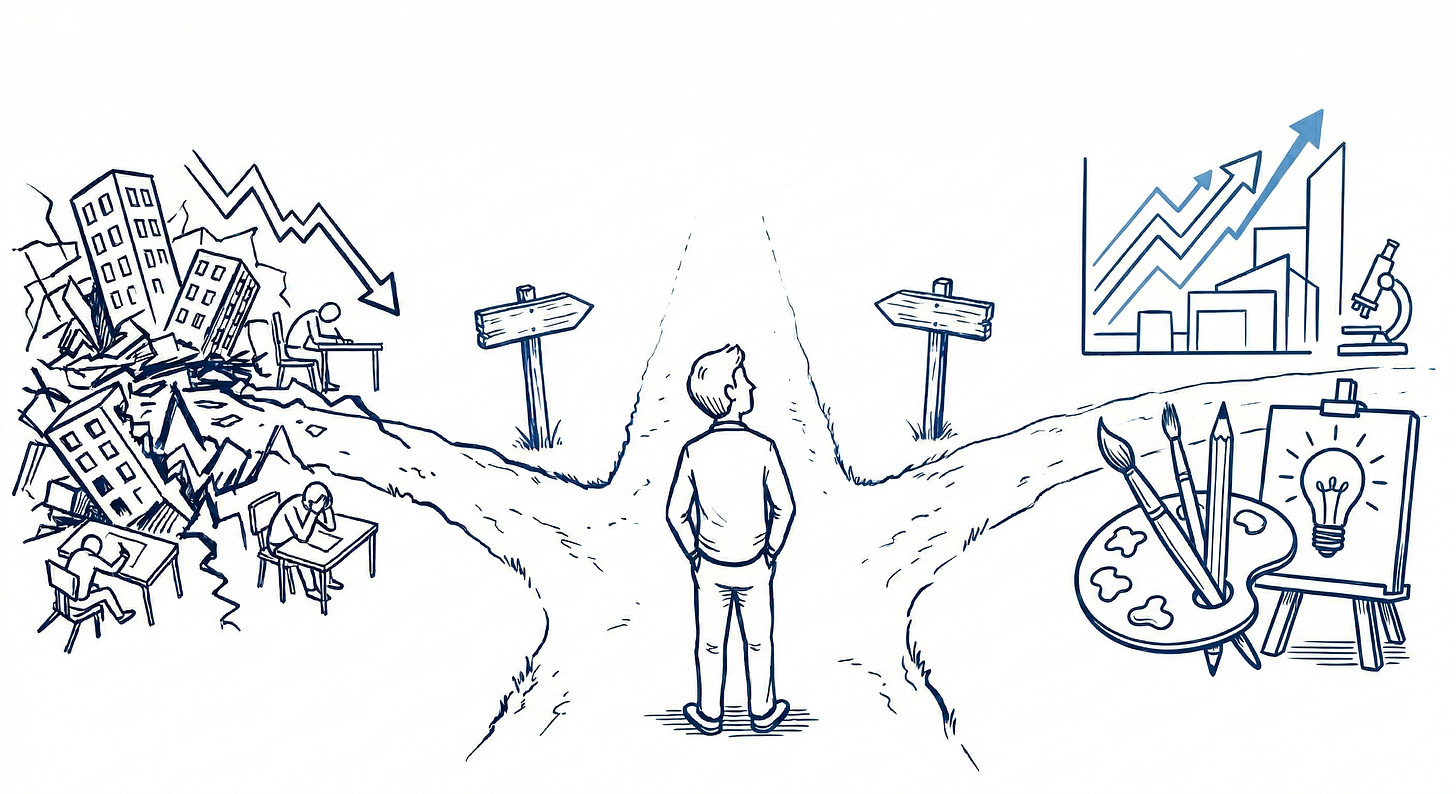

One side says AI is the greatest leap forward in human history — cures, abundance, human potential finally unleashed. The other says we’re building something we can’t control, and the damage is already starting. Here are the lenses worth using when you encounter either argument.

what happened

Two things landed in the last few weeks that put the AI question back center stage for me. First, a documentary called The AI Doc: Or How I Became an Apocaloptimist hit theaters, where a father-to-be interviews the CEOs building this technology and tries to figure out what world his kid is inheriting (relatable). Then early this week OpenAI published a 13-page policy document acknowledging that AI could devastate workers, concentrate wealth, and outpace the institutions meant to govern it — and proposed fixes.

I tend to favor the word “apocaloptimist” as the most honest framing of where we are: genuinely uncertain, trying to hold both realities.

the binary

“This technology is going to lift humanity”

The optimists point to genuine miracles already happening: diseases being caught earlier, scientific hypotheses being tested in days instead of years, tools once reserved for specialists now democratized. The strongest version of the argument doesn’t deny that disruption is inevitable; it holds that every major technological shift looked terrifying from the inside. Electricity, the internet, mass production: they all created more than they destroyed. This will too.

“We’re building something we can’t control”

The skeptics’ fears are warranted. This technological wave is different in both kind and degree — it can do white-collar cognitive work at scale, which is new. Past automation displaced factory workers who could retrain for other work. It’s less clear what radiologists, paralegals, and junior developers retrain for. And the companies building the technology have every financial incentive to move fast and figure out the consequences later.

the nuance

The optimists and pessimists are both right, just on different time horizons. The promise is real, and so is the peril. They’re not mutually exclusive — they’re both baked into the same technology at the same time. Feeling fear and excitement is probably the most honest response available right now.

The key lens is power. Who controls the infrastructure? Who writes the rules? Who captures the gains? Every major technological shift in history distributed its benefits unevenly — and the pattern has less to do with the technology itself than with who had a seat at the table when the structure got built. AI is no different. The question worth asking is whether the systems exist to distribute that value broadly, and who’s actually building those systems.

The “slow down” debate is real. When a technology advances faster than the institutions meant to govern it, accountability becomes nearly impossible. The counterargument is that slowing down unilaterally just hands the lead to someone with fewer guardrails. Both are legitimate. A helpful lens: whenever you hear “we can’t slow down,” ask who’s saying it and what they stand to lose if the pace changes.

The companies building this have a financial interest in the hype. OpenAI, Google, Anthropic — their entire business model depends on investors believing in the limitless potential of AI. Which means the most dramatic claims about what AI will do for humanity are also, simultaneously, a fundraising pitch. Keep that in mind when evaluating who’s telling you how transformative this is, and why.

think deeper

The right question isn’t whether AI is good or bad. That framing is already obsolete — it’s here, accelerating, and the people building it are openly publishing documents about how to govern what they’re unleashing.

The question is whether the institutions meant to distribute the benefits and absorb the shocks can move anywhere close to as fast as the technology does.

Historically, they can’t. The Industrial Revolution created enormous wealth. It also created the conditions that made the Progressive Era and the New Deal necessary. That gap between the technology arriving and the institutions catching up is where most of the damage happens.

So the thing worth sitting with is timing. What gets built into this transition now, before power concentrates and the patterns calcify, and what are we left arguing about after the fact?

The OpenAI document is interesting because it’s evidence that even the builders know the window for getting this right is open now, but won’t stay open forever.

Think for yourself.

j

The Nuance exists to give you the foundational context to make sense of a changing world.

If you found value in it, the most impactful thing you can do is forward it.